How AI Agents and Claude Skills Work: Build Better Workflows With Less Context

Most people get weak results from AI agents for one reason: they keep stuffing more instructions into the system and hoping the model gets smarter. In practice, that usually creates slower runs, higher token usage, and worse outputs.

The better approach is not to add more noise. It is to give the agent the right context at the right time, then turn proven workflows into reusable skills.

If you want Claude or any coding agent to produce more reliable work, the goal is simple: keep permanent context minimal, teach workflows through real execution, and only codify what has already worked.

Where Most Builders Lose Money

- Overloading context: Huge agent instruction files add tokens on every turn, even when most of that information is not needed.

- Creating skills too early: Many people write skill files before the workflow has been tested, which leads to brittle automation.

- Using generic instructions: Telling an agent obvious things like the framework or basic conventions wastes context that could be used for real task execution.

- Scaling too fast: Adding sub-agents, tools, and complex setups before a core workflow works creates more failure points.

- Ignoring failure loops: When an agent fails, many users restart from scratch instead of using the error to improve the workflow and update the skill.

The System in One Sentence

Use minimal always-on context, teach the agent a workflow through guided iteration, and convert successful runs into skills that are loaded only when needed.

Step 1: Understand What Actually Fills an Agent’s Context

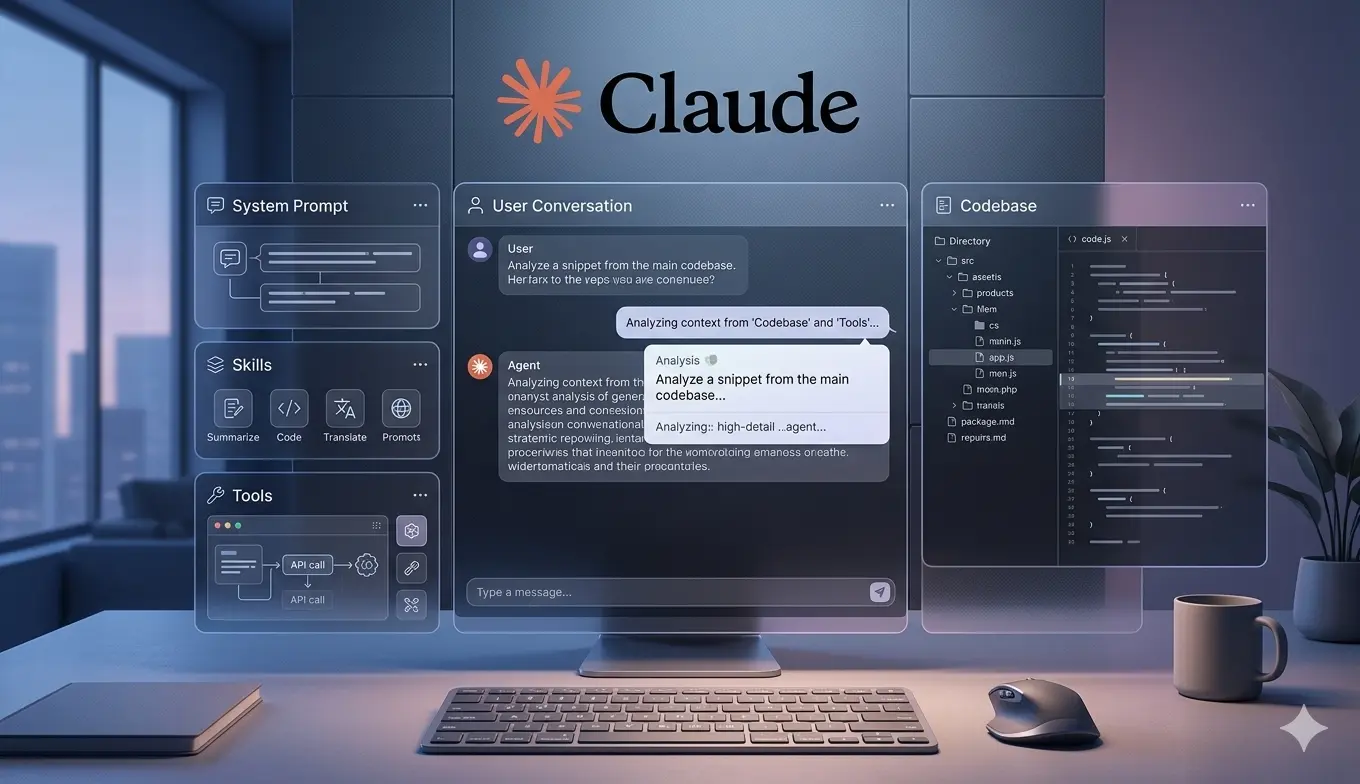

Before improving outputs, understand what the agent is working with. In most AI agent setups, context is assembled from several layers:

- System prompt from the model provider

- Persistent instruction files such as agent.md or similar

- Available skills

- Tools the harness can call

- The codebase or working files

- The live user conversation

Outcome: You stop treating bad results like magic and start diagnosing where the agent is getting its guidance from.
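To make those layers concrete, here is a minimal Python sketch that assembles a context from the six layers listed above and reports how much each one contributes. It is an illustration, not how any particular harness works. The layer contents are placeholder strings, and the token count uses a rough heuristic of about four characters per token; real tokenizers differ.

```python
from dataclasses import dataclass


@dataclass
class Layer:
    name: str
    text: str


def estimate_tokens(text: str) -> int:
    """Rough heuristic: about 4 characters per token. Real tokenizers differ."""
    return max(1, len(text) // 4) if text else 0


def context_breakdown(layers: list[Layer]) -> dict[str, int]:
    """Return the estimated token count contributed by each context layer."""
    return {layer.name: estimate_tokens(layer.text) for layer in layers}


# Placeholder contents; a real harness assembles these layers itself.
layers = [
    Layer("system prompt", "You are a helpful coding agent. " * 20),
    Layer("instruction file", "Always use tabs. Our API lives in /api. " * 50),
    Layer("skill descriptions", "report-generator: builds the weekly report. "),
    Layer("tool definitions", "read_file(path), run_tests() " * 10),
    Layer("working files", "def main(): ... " * 30),
    Layer("conversation", "Please refactor the billing module."),
]

breakdown = context_breakdown(layers)
total = sum(breakdown.values())
# Largest layers first, so the biggest source of guidance is easy to spot.
for name, tokens in sorted(breakdown.items(), key=lambda kv: -kv[1]):
    print(f"{name:<20} {tokens:>5} tokens ({tokens / total:.0%})")
```

In this made-up setup, the always-loaded instruction file dominates the context even though the live request is one short sentence, which is exactly the kind of imbalance worth diagnosing.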

Step 2: Keep Permanent Instructions Minimal

Most users do not need a large always-loaded instruction file. If the codebase already shows the framework, folder structure, or common patterns, the agent can often infer that on its own.

Use permanent instruction files only when the agent must know something on every turn, such as:

- Proprietary company rules

- Internal approval requirements

- Business-specific methods that apply to every task

- Non-obvious conventions that do not exist in the codebase

Outcome: Lower token usage, cleaner context, and less confusion during long sessions.
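The token argument is simple arithmetic: an always-loaded file is sent again with every turn, so its cost grows linearly with session length. The sketch below compares a bloated file with a lean one over the same session. The token counts and turn count are illustrative assumptions, and the calculation ignores provider-side prompt caching, which can reduce the price of repeated content.

```python
def session_overhead(instruction_tokens: int, turns: int) -> int:
    """Tokens an always-loaded instruction file adds across a whole session.

    The file is re-sent on every turn, so overhead grows linearly with the
    number of turns. Prompt caching is deliberately ignored here.
    """
    return instruction_tokens * turns


# Illustrative numbers only: a bloated 3,000-token file versus a lean
# 300-token file that keeps just the non-obvious, always-relevant rules.
turns = 40
bloated = session_overhead(3_000, turns)
lean = session_overhead(300, turns)
print(bloated, lean, bloated - lean)  # 120000 12000 108000
```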

Step 3: Use Skills for Specific Workflows, Not General Knowledge

Skills work better than large instruction files because only each skill's name and description are visible to the agent at first. The full contents are pulled in only when the skill is relevant to the task at hand.

That means a skill is ideal for workflows such as:

- Generating a report in a specific format

- Cleaning up code according to your preferred structure

- Researching sponsors or vendors using a fixed checklist

- Preparing content for Notion, Google Sheets, or another tool

Do not use a skill for something the model already knows, such as common frameworks, basic coding patterns, or generic writing rules.

Outcome: The agent gets detailed instructions only when needed, which preserves context and improves performance.
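The loading behavior described in this step can be sketched as a small registry: the always-present index holds one short line per skill, and a skill's full body enters the context only when a request matches it. This is a simplified illustration, not how Claude chooses skills. In a real harness the model itself judges relevance from the descriptions; here a naive word-overlap score stands in for that judgment, and the skill names and bodies are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Skill:
    name: str
    description: str
    body: str  # full instructions, kept out of context until needed


class SkillRegistry:
    def __init__(self, skills: list[Skill]):
        self._skills = {s.name: s for s in skills}

    def index(self) -> str:
        """What is always in context: one short line per skill."""
        return "\n".join(f"{s.name}: {s.description}" for s in self._skills.values())

    def select(self, request: str) -> Optional[Skill]:
        """Naive stand-in for relevance: most description words shared with the request."""
        words = set(request.lower().split())
        best, best_score = None, 0
        for skill in self._skills.values():
            score = len(words & set(skill.description.lower().split()))
            if score > best_score:
                best, best_score = skill, score
        return best

    def load(self, request: str) -> str:
        """Return the full skill body only when some skill matches the request."""
        skill = self.select(request)
        return skill.body if skill else ""


registry = SkillRegistry([
    Skill("weekly-report", "generate the weekly analytics report",
          "1. Pull last week's numbers\n2. Format them as a table"),
    Skill("sponsor-research", "research and vet a potential sponsor",
          "1. Check the website\n2. Review social profiles"),
])
print(registry.index())
print(registry.load("please research this sponsor"))
```

The design choice to illustrate is the split between `index()` and `load()`: the index costs a few tokens on every turn, while the detailed steps cost tokens only on the turns that use them.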

Step 4: Identify One Workflow Worth Automating

Start with a repeatable task that has a clear outcome. Good examples include:

- Reviewing inbound sponsor emails

- Generating a weekly analytics report

- Refactoring generated code into a preferred structure

- Checking contracts or financial summaries against a checklist

The key is to choose a workflow where right and wrong can be recognized clearly.

Outcome: You focus the agent on useful work instead of building abstract automation for no reason.
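One way to make right and wrong recognizable is to write the acceptance criteria down as a check that a run either passes or fails. The sketch below does this for the weekly analytics report example. The required section names and the TODO rule are hypothetical placeholders for whatever your own report must contain.

```python
# Hypothetical section names; replace them with what your report must contain.
REQUIRED_SECTIONS = ("## Summary", "## Traffic", "## Conversions")


def check_report(report: str, required: tuple[str, ...] = REQUIRED_SECTIONS) -> list[str]:
    """Return every problem found in a generated report; empty means it passed."""
    problems = [f"missing section: {s}" for s in required if s not in report]
    if "TODO" in report:
        problems.append("report still contains TODO placeholders")
    return problems


good = "## Summary\n...\n## Traffic\n...\n## Conversions\n..."
bad = "## Summary\nTODO: fill in"
print(check_report(good))  # []
print(check_report(bad))
```

If you cannot write even a rough check like this for a task, that is a sign the outcome is not yet clear enough to automate.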

Step 5: Teach the Workflow by Running It Manually With the Agent

This is the step most people skip. Do not create the skill first. Instead, work through the task with the agent in real time.

Guide it step by step:

- Tell it exactly what to check first

- Review the result

- Correct the reasoning when it misses something

- Define what counts as a good or bad outcome

- Repeat until the workflow succeeds reliably

For example, if the task is sponsor research, you might instruct the agent to:

- Check the company website

- Review social profiles

- Look at customer reviews

- Check funding or company legitimacy signals

- Reject the company if multiple credibility signals fail

Outcome: The agent learns your actual workflow instead of guessing what you meant.
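The last instruction in the sponsor example is a decision rule, and pinning it down precisely is what lets you judge each guided run. Here is a minimal sketch, assuming "multiple" means two or more failed signals. The signal names mirror the checklist above, while the threshold, the data shape, and the company name are illustrative assumptions you would replace with the criteria you settled on while guiding the agent.

```python
from dataclasses import dataclass

# The four signals from the checklist (names are illustrative).
SIGNALS = ("website", "social_profiles", "customer_reviews", "funding_or_legitimacy")


@dataclass
class SponsorCheck:
    company: str
    results: dict[str, bool]  # signal name -> did it pass?


def evaluate(check: SponsorCheck, max_failures: int = 1) -> tuple[str, list[str]]:
    """Reject when more than `max_failures` credibility signals fail.

    A signal that was never checked counts as a failure, so a skipped
    step cannot quietly pass.
    """
    failed = [s for s in SIGNALS if not check.results.get(s, False)]
    verdict = "reject" if len(failed) > max_failures else "accept"
    return verdict, failed


# Hypothetical company and results.
check = SponsorCheck("Acme Widgets", {
    "website": True,
    "social_profiles": False,
    "customer_reviews": False,
    "funding_or_legitimacy": True,
})
print(evaluate(check))  # ('reject', ['social_profiles', 'customer_reviews'])
```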

Step 6: Convert the Successful Run Into a Skill

Once the workflow has worked properly at least a few times, tell the agent to review what it just did and convert that process into a reusable skill.

A strong skill should include:

- A clear name

- A short description that tells the agent when to use it

- The exact sequence of steps

- Decision criteria

- Output format

- Error handling guidance where relevant

This is better than writing the skill from scratch because the skill is now based on a proven run, not a guess.

Outcome: You get a reusable workflow backed by real execution data.
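When you ask the agent to convert a successful run, the result is typically a SKILL.md file. In Anthropic's Agent Skills format, that file opens with YAML frontmatter containing a `name` and a `description`, followed by Markdown instructions. The Python sketch below assembles such a file from the components listed in this step. The body's section headings are an assumed layout rather than a required one, the name check follows the lowercase-and-hyphens convention (confirm exact limits in the current Agent Skills documentation), and the example content is hypothetical.

```python
import re
from typing import Optional


def render_skill(
    name: str,
    description: str,
    steps: list[str],
    criteria: list[str],
    output_format: str,
    error_handling: Optional[list[str]] = None,
) -> str:
    """Assemble a SKILL.md-style document from the parts of a proven run."""
    # Names are short identifiers: lowercase letters, digits, and hyphens.
    if not re.fullmatch(r"[a-z0-9-]{1,64}", name):
        raise ValueError(f"invalid skill name: {name!r}")
    # No YAML escaping here, so keep the description free of ": " sequences.
    lines = ["---", f"name: {name}", f"description: {description}", "---", ""]
    lines += ["## Steps", *[f"{i}. {s}" for i, s in enumerate(steps, 1)], ""]
    lines += ["## Decision criteria", *[f"- {c}" for c in criteria], ""]
    lines += ["## Output format", output_format, ""]
    if error_handling:
        lines += ["## Error handling", *[f"- {e}" for e in error_handling], ""]
    return "\n".join(lines)


skill = render_skill(
    name="sponsor-research",
    description="Use when vetting a potential sponsor before replying to an inbound email.",
    steps=[
        "Check the company website",
        "Review social profiles",
        "Look at customer reviews",
        "Check funding or company legitimacy signals",
    ],
    criteria=["Reject the company if two or more credibility signals fail"],
    output_format="A one-line verdict (accept or reject) followed by the failed signals.",
)
print(skill)
```

Note how the description states when to use the skill, not just what it does; that sentence is all the agent sees until the skill is loaded.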

Step 7: Improve the Skill Recursively

Even a good skill will fail sometimes. That is normal. The goal is not to avoid failure. The goal is to use failure to improve the workflow.

When the agent fails:

- Ask what went wrong

- Identify the specific error or missed step

- Guide it to fix the issue

- Tell it to update the skill so the same error is less likely next time

This creates a feedback loop where the skill gets stronger every time the agent encounters a real-world edge case.

Outcome: Your workflow becomes more reliable over time instead of staying fragile.
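The "update the skill" step can be as simple as appending the lesson from each failure to a dedicated section of the skill file, so the next run sees it. A minimal sketch, assuming a "## Known pitfalls" section (a hypothetical convention, not a required part of any skill format) and skipping lessons that are already recorded:

```python
PITFALLS_HEADING = "## Known pitfalls"  # hypothetical section name


def add_lesson(skill_text: str, lesson: str) -> str:
    """Record a lesson from a failed run under the pitfalls section.

    Creates the section at the end of the file if it does not exist yet,
    and returns the text unchanged if the lesson is already recorded.
    """
    bullet = f"- {lesson.strip()}"
    lines = skill_text.rstrip("\n").split("\n")
    if bullet in lines:
        return skill_text
    if PITFALLS_HEADING not in lines:
        lines += ["", PITFALLS_HEADING]
    # Insert after the last bullet that directly follows the heading.
    end = lines.index(PITFALLS_HEADING) + 1
    while end < len(lines) and lines[end].startswith("- "):
        end += 1
    lines.insert(end, bullet)
    return "\n".join(lines) + "\n"


skill = "---\nname: sponsor-research\ndescription: Vet a sponsor.\n---\n\n## Steps\n1. Check the website\n"
skill = add_lesson(skill, "Parked domains count as a failed website check")
skill = add_lesson(skill, "Parked domains count as a failed website check")  # no duplicate
print(skill)
```

Keeping lessons in one section makes it easy to review later whether a pitfall has grown important enough to become a proper step.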

Step 8: Add Sub-Agents Only After the Core Workflow Works

Many users rush into multi-agent setups too early. A better sequence is:

- Start with one main agent

- Build one or more reliable skills

- Prove the workflow is productive

- Then split work across sub-agents where specialization makes sense

Good reasons to add sub-agents include:

- Different domains need separate workflows

- Tasks can run in parallel

- You already know what each agent should own

Outcome: You scale only when the process is stable enough to benefit from more automation.
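The sequence in this step can be expressed as a gate followed by a fan-out: only when the single-agent workflow has a proven track record do you split independent domains across sub-agents and run them in parallel. The sketch below uses Python's standard `concurrent.futures`. `run_subagent` is a hypothetical stand-in for however your harness invokes a sub-agent, and the five-run minimum and 90% success threshold are arbitrary assumptions.

```python
from concurrent.futures import ThreadPoolExecutor


def ready_to_split(run_log: list[bool], min_runs: int = 5, threshold: float = 0.9) -> bool:
    """Gate: enough recorded runs, and a high enough success rate."""
    if len(run_log) < min_runs:
        return False
    return sum(run_log) / len(run_log) >= threshold


def run_subagent(domain: str, task: str) -> str:
    """Hypothetical placeholder for invoking a sub-agent that owns one domain."""
    return f"[{domain}] done: {task}"


def fan_out(tasks: dict[str, str]) -> dict[str, str]:
    """Run independent per-domain tasks in parallel, one sub-agent each."""
    with ThreadPoolExecutor(max_workers=max(1, len(tasks))) as pool:
        futures = {domain: pool.submit(run_subagent, domain, task)
                   for domain, task in tasks.items()}
        return {domain: f.result() for domain, f in futures.items()}


run_log = [True] * 9 + [False]  # hypothetical history: 9 of 10 runs succeeded
if ready_to_split(run_log):
    results = fan_out({
        "sponsors": "Vet this week's inbound sponsor emails",
        "analytics": "Generate the weekly report",
    })
    for outcome in results.values():
        print(outcome)
```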

Tools and Components That Matter Most

- Model quality: Strong current models are already capable, so workflow design matters more than over-explaining basics.

- Agent harness: The wrapper around the model affects tools, behavior, and output quality.

- Skills: Best for reusable, conditional workflows.

- Codebase or templates: A clean starting structure becomes useful context for the agent.

- User guidance: Your corrections and examples are the fastest way to improve reliability.

Optimization Tips for Better Claude Skills

- Use fewer always-on instructions: Save context for the live task.

- Do not explain obvious facts: If the codebase already shows React or another stack, skip it.

- Write skills from successful runs: Proven process beats imagined process.

- Keep skill descriptions specific: The agent should know exactly when to call the skill.

- Treat failures as training data: Each mistake can improve the next version of the skill.

- Prefer templates over bloated instructions: Good starting structure often helps more than long prompt files.

Where Value Is Created

The commercial value comes from reliability and repeatability.

- Where value is gained:

  - Less token waste across long sessions

  - More consistent output from proven workflows

  - Faster execution of repeated business tasks

  - Higher leverage from one agent before hiring more tools or people

- Where money is lost:

  - Paying for large context files on every turn

  - Building complex agent systems before a simple workflow works

  - Using downloaded or generic skills that do not match your process

  - Restarting failed tasks instead of improving the underlying skill

If a workflow saves time every week, reduces bad decisions, or standardizes outputs across the business, that is where the real ROI appears.
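A quick way to test whether a workflow clears that bar is to compare the time it saves with the time spent building and maintaining the skill. A minimal sketch; every input is an assumption you would replace with your own estimates.

```python
def weeks_to_break_even(build_hours: float, upkeep_hours_per_week: float,
                        hours_saved_per_week: float) -> float:
    """Weeks until cumulative net time saved covers the build time.

    Returns infinity if weekly upkeep eats all of the weekly savings.
    """
    net_weekly = hours_saved_per_week - upkeep_hours_per_week
    if net_weekly <= 0:
        return float("inf")
    return build_hours / net_weekly


# Illustrative inputs: 6 hours to build, 0.5 h/week upkeep, 2 h/week saved.
print(weeks_to_break_even(6, 0.5, 2))  # 4.0
```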

Final Takeaway

Better AI agent performance usually comes from better workflow design, not more prompt volume. Keep permanent context lean, teach the process through real runs, and turn proven workflows into skills.

Start with one useful task, build one strong skill, and improve it every time the agent fails. That is how you get from interesting demos to reliable business output.

This topic makes more sense when seen as part of a larger system. For the full framework, read "Claude AI Agents: What They Are, How They Work, and How to Build Real Agent Systems."

Source

This article is based on content from Greg Isenberg.

Watch the original video here: Original YouTube episode on AI agents, context, and Claude skills